Using Blur to Affect Perceived Distance and Size

Abstract

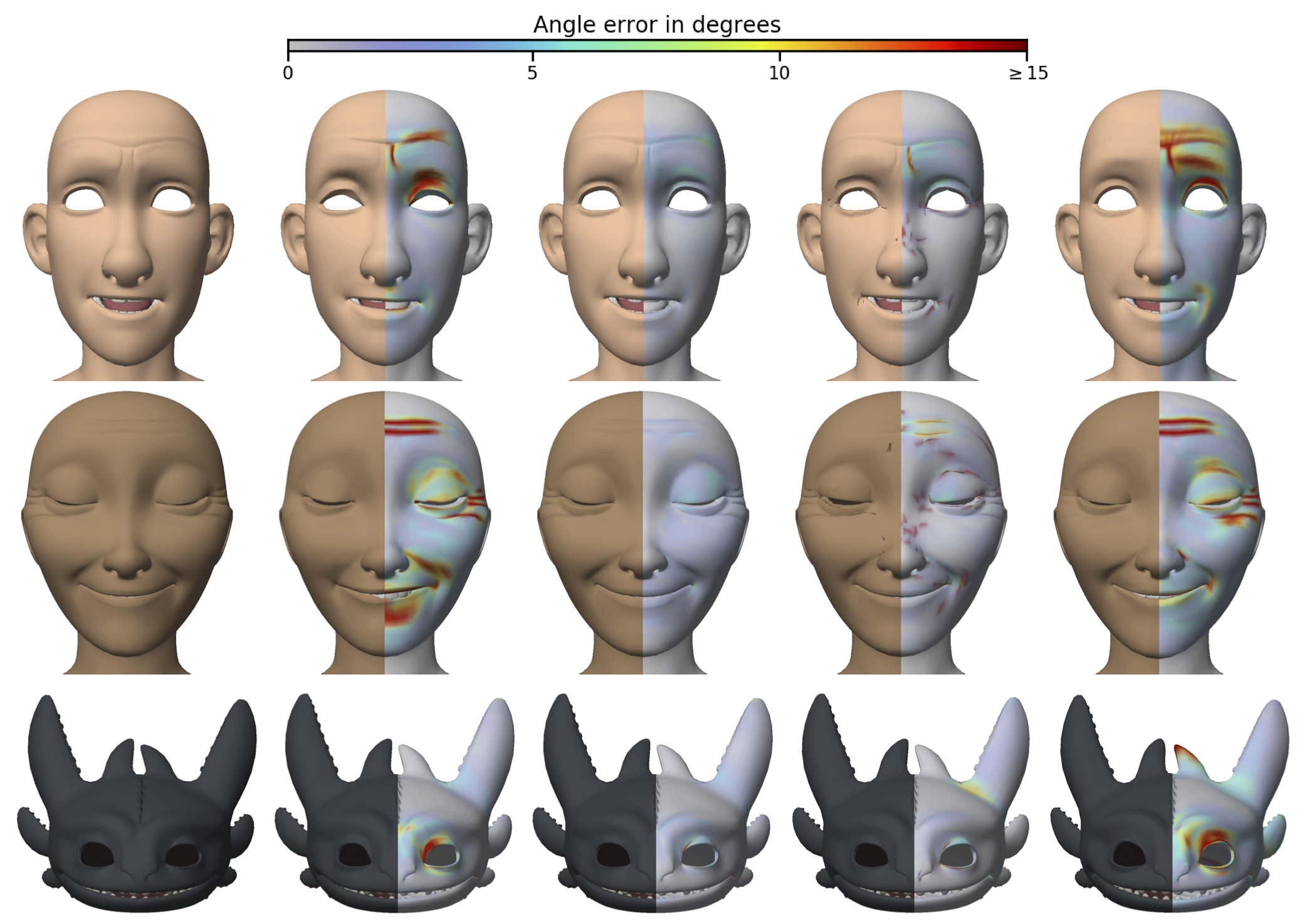

We present a probabilistic model of how viewers may use defocus blur in conjunction with other pictorial cues to estimate the absolute distances to objects in a scene. Our model explains how the pattern of blur in an image together with relative depth cues indicates the apparent scale of the image’s contents. From the model, we develop a semi-automated algorithm that applies blur to a sharply rendered image and thereby changes the apparent distance and scale of the scene’s contents. To examine the correspondence between the model/algorithm and actual viewer experience, we conducted an experiment with human viewers and compared their estimates of absolute distance to the model’s predictions. We did this for images with geometrically correct blur due to defocus and for images with commonly used approximations to the correct blur. The agreement between the experimental data and model predictions was excellent. The model predicts that some approximations should work well and that others should not. Human viewers responded to the various types of blur in much the way the model predicts. The model and algorithm allow one to manipulate blur precisely and to achieve the desired perceived scale efficiently.
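The geometric link between blur and absolute distance can be illustrated with the standard thin-lens relation for the blur-circle diameter. The sketch below is a simplification, not the paper's probabilistic model: it is deterministic, and the function names, the 4.6 mm aperture, and the 17 mm lens-to-image-plane distance are illustrative assumptions. It shows that, for a fixed relative depth, blur scales inversely with focal distance, and that a known relative depth plus a measured blur determines the focal distance.

```python
def blur_circle_diameter(aperture, focal_distance, object_distance, image_distance):
    """Thin-lens blur-circle diameter on the image plane.

    All arguments share one length unit (metres here). `aperture` is the
    aperture diameter, `focal_distance` is the distance the lens is focused
    at, and `image_distance` is the lens-to-image-plane distance.
    """
    return aperture * image_distance * abs(1.0 / focal_distance - 1.0 / object_distance)


def focal_distance_from_blur(blur, depth_ratio, aperture, image_distance):
    """Invert the relation above for the focal distance.

    `depth_ratio` is object_distance / focal_distance, i.e. the relative
    depth that other pictorial cues are assumed to supply.
    """
    if blur <= 0 or depth_ratio <= 0:
        raise ValueError("blur and depth_ratio must be positive")
    return aperture * image_distance * abs(1.0 - 1.0 / depth_ratio) / blur


# Assumed, illustrative optical parameters (not values from the paper).
APERTURE = 0.0046        # metres
IMAGE_DISTANCE = 0.017   # metres

# Same relative depth (object twice as far as the focal plane) at two scales.
near = blur_circle_diameter(APERTURE, 0.5, 1.0, IMAGE_DISTANCE)
far = blur_circle_diameter(APERTURE, 50.0, 100.0, IMAGE_DISTANCE)
blur_ratio = near / far  # how much more blur the small-scale scene produces

# Recover the focal distance of the near scene from its blur and depth ratio.
recovered = focal_distance_from_blur(near, 2.0, APERTURE, IMAGE_DISTANCE)
```

Under these assumptions, the same pattern of relative depth produces far more blur when the scene is close, which is consistent with the abstract's claim that adding blur to a sharp image can make its contents appear nearer and smaller.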

Citation

Robert T. Held, Emily A. Cooper, James F. O'Brien, and Martin S. Banks. "Using Blur to Affect Perceived Distance and Size". ACM Transactions on Graphics, 29(2):19:1–16, March 2010.

Supplemental Material

Explanatory Video

SIGGRAPH 2010 Talk Slides (PDF)

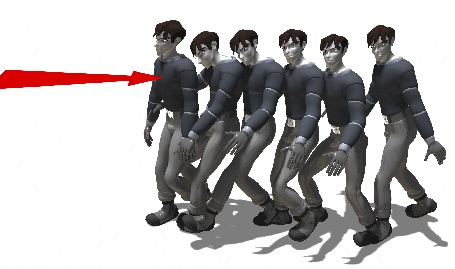

Results for the seven subjects in the psychophysical study