Three Techniques for Rendering Generalized Depth of Field Effects

Abstract

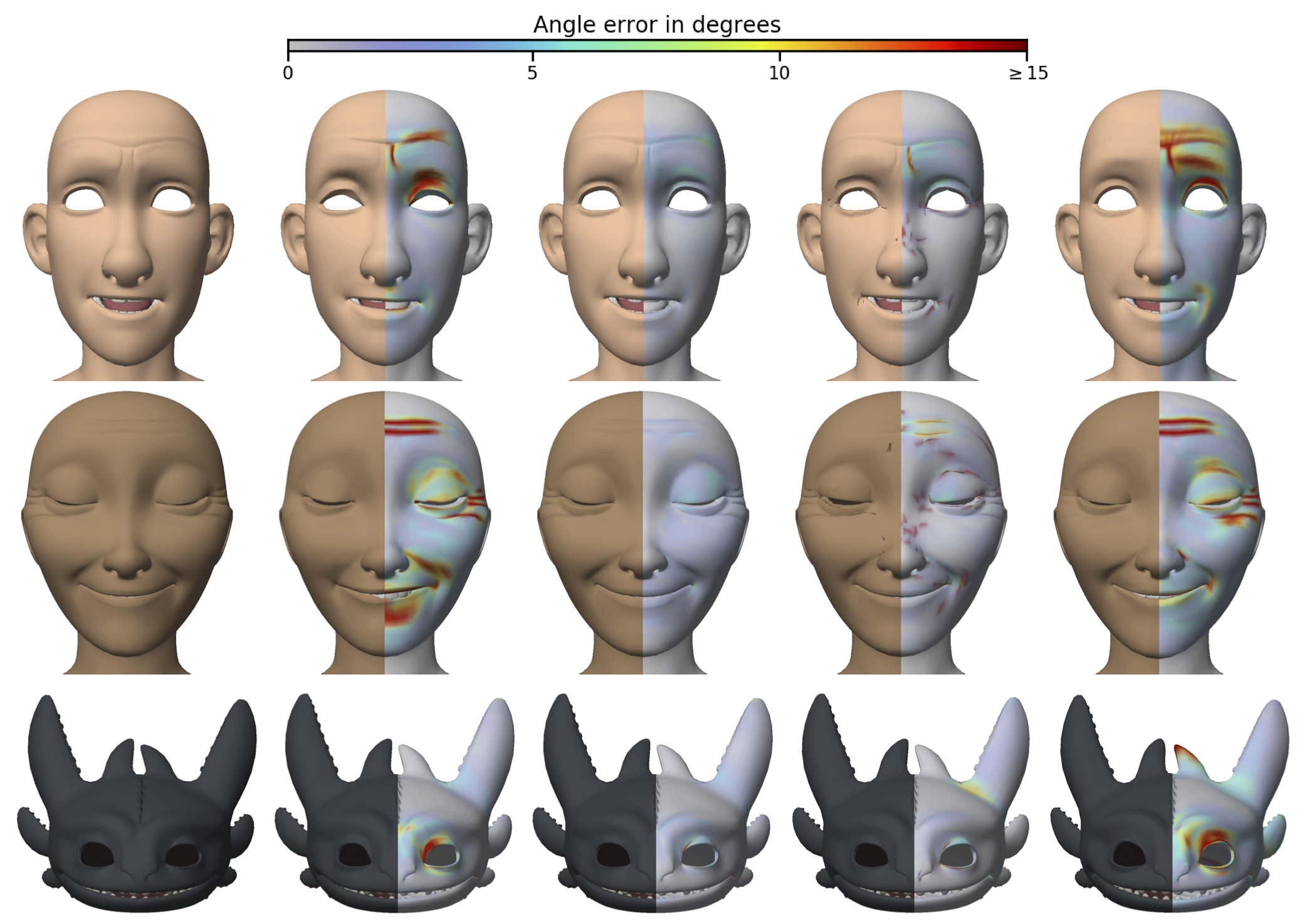

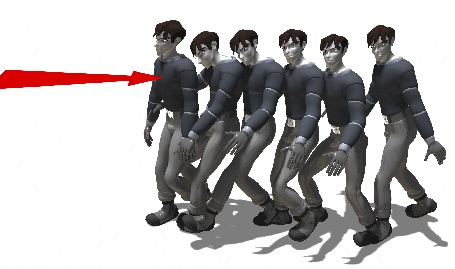

Depth of field refers to the swath of a scene that is imaged in acceptably sharp focus through an optical system, such as a camera lens. Control over depth of field is an important artistic tool that can be used to emphasize the subject of a photograph. In a real camera, control over depth of field is limited by the laws of physics and by the physical constraints of the lens. Depth of field has been rendered in computer graphics, but usually with the same limited control found in real camera lenses. In this paper, we generalize depth of field in computer graphics by allowing the user to specify the distribution of blur throughout a scene in a more flexible manner. Generalized depth of field provides a novel tool to emphasize an area of interest within a 3D scene, to pick out objects from a crowd, and to make a busy, complex picture easier to understand by focusing only on relevant details that may be scattered throughout the scene. We present three approaches for rendering generalized depth of field, based on nonlinear distributed ray tracing, compositing, and simulated heat diffusion. Each method has a different set of strengths and weaknesses, so it is useful to have all three available. The ray tracing approach allows the amount of blur to vary with depth in an arbitrary way. The compositing method creates a synthetic image whose focus and aperture settings vary per pixel. The diffusion approach provides full generality by allowing each point in 3D space to have an arbitrary amount of blur.
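To make the idea of diffusion-driven blur concrete, the following is a minimal sketch of spatially varying blur by simulated heat diffusion. It is not the authors' implementation: it works on a 2D grayscale image rather than in 3D, it uses a simple explicit finite-difference scheme, and the names `diffuse_blur`, `conductivity`, `steps`, and `dt` are hypothetical. The assumption is that a user-supplied conductivity map plays the role of the blur field: where conductivity is 0 the pixel stays sharp, and where it is 1 intensity spreads freely to its neighbors.

```python
def diffuse_blur(image, conductivity, steps=20, dt=0.2):
    """Blur `image` by explicit heat diffusion with per-pixel conductivity.

    image        -- list of rows of floats (grayscale intensities)
    conductivity -- same shape, values in [0, 1]; 0 keeps a pixel sharp
    steps        -- number of diffusion iterations (more steps, more blur)
    dt           -- time step; must be <= 0.25 for this 4-neighbor scheme
    """
    if not 0.0 < dt <= 0.25:
        raise ValueError("dt must be in (0, 0.25] for stability")

    height, width = len(image), len(image[0])
    current = [row[:] for row in image]
    neighbors = ((-1, 0), (1, 0), (0, -1), (0, 1))

    for _ in range(steps):
        updated = [row[:] for row in current]
        for y in range(height):
            for x in range(width):
                net_flux = 0.0
                for dy, dx in neighbors:
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < height and 0 <= nx < width:
                        # Symmetric link conductance: heat crosses an edge
                        # only if both endpoints allow it, so total
                        # intensity is conserved and sharp regions do not
                        # leak into blurred ones.
                        g = min(conductivity[y][x], conductivity[ny][nx])
                        net_flux += g * (current[ny][nx] - current[y][x])
                updated[y][x] = current[y][x] + dt * net_flux
        current = updated
    return current
```

In this sketch, a bright point placed where the conductivity is 0 is left untouched, while an identical point placed where the conductivity is 1 spreads into a soft blob. Taking the minimum of the two endpoint conductivities is one design choice among several; averaging them would instead let blur bleed slightly across the boundary between sharp and blurred regions.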

Citation

Todd J. Kosloff and Brian A. Barsky. "Three Techniques for Rendering Generalized Depth of Field Effects". In Proceedings of the Fourth SIAM Conference on Mathematics for Industry: Challenges and Frontiers (MI09), pages 42–48, October 2009.